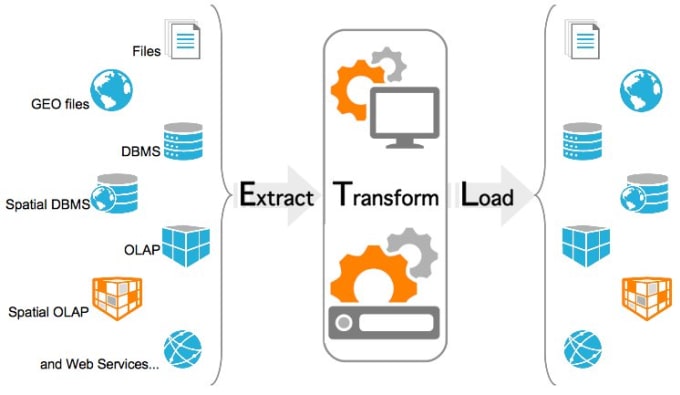

Extract, Transform, and Load (ETL) operations form the backbone of an enterprise data lake. They make it possible to extract data from multiple sources, transform it according to business needs, and write the results to the desired target location.

The following Airflow DAG implements a minimal ETL pipeline: three `PythonOperator` tasks (`extract`, `transform`, and `load`) that pass their intermediate results to one another through XCom.

```python
#
# Licensed to the Apache Software Foundation (ASF) under one
# or more contributor license agreements. See the NOTICE file
# distributed with this work for additional information
# regarding copyright ownership. The ASF licenses this file
# to you under the Apache License, Version 2.0 (the
# "License"); you may not use this file except in compliance
# with the License. You may obtain a copy of the License at
#
# Unless required by applicable law or agreed to in writing,
# software distributed under the License is distributed on an
# "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY
# KIND, either express or implied. See the License for the
# specific language governing permissions and limitations
# under the License.

# pylint: disable=missing-function-docstring

"""
# ETL DAG Tutorial Documentation
This ETL DAG is compatible with Airflow 1.10.x (specifically tested with 1.10.12) and is
referenced as part of the documentation that goes along with the Airflow Functional DAG tutorial.
"""
import json
from textwrap import dedent

# The DAG object; we'll need this to instantiate a DAG
from airflow import DAG

# Operators; we need this to operate!
from airflow.operators.python import PythonOperator
from airflow.utils.dates import days_ago

# These args will get passed on to each operator
# You can override them on a per-task basis during operator initialization
default_args = {
    'owner': 'airflow',
}

with DAG(
    'tutorial_etl_dag',
    default_args=default_args,
    description='ETL DAG tutorial',
    schedule_interval=None,
    start_date=days_ago(2),
    tags=['example'],
) as dag:
    dag.doc_md = __doc__

    def extract(**kwargs):
        ti = kwargs['ti']
        # Simulate getting data by reading a hardcoded JSON string of order values
        data_string = '{"1001": 301.27, "1002": 433.21, "1003": 502.22}'
        ti.xcom_push('order_data', data_string)

    def transform(**kwargs):
        ti = kwargs['ti']
        extract_data_string = ti.xcom_pull(task_ids='extract', key='order_data')
        order_data = json.loads(extract_data_string)

        # Sum all order values into a single total
        total_order_value = 0
        for value in order_data.values():
            total_order_value += value

        total_value = {"total_order_value": total_order_value}
        total_value_json_string = json.dumps(total_value)
        ti.xcom_push('total_order_value', total_value_json_string)

    def load(**kwargs):
        ti = kwargs['ti']
        total_value_string = ti.xcom_pull(task_ids='transform', key='total_order_value')
        total_order_value = json.loads(total_value_string)

        # Instead of writing to a target system, just print the result
        print(total_order_value)

    extract_task = PythonOperator(
        task_id='extract',
        python_callable=extract,
    )
    extract_task.doc_md = dedent(
        """\
    # Extract task
    A simple Extract task to get data ready for the rest of the data pipeline.
    In this case, getting data is simulated by reading from a hardcoded JSON string.
    This data is then put into xcom, so that it can be processed by the next task.
    """
    )

    transform_task = PythonOperator(
        task_id='transform',
        python_callable=transform,
    )
    transform_task.doc_md = dedent(
        """\
    # Transform task
    A simple Transform task which takes in the collection of order data from xcom
    and computes the total order value.
    This computed value is then put into xcom, so that it can be processed by the next task.
    """
    )

    load_task = PythonOperator(
        task_id='load',
        python_callable=load,
    )
    load_task.doc_md = dedent(
        """\
    # Load task
    A simple Load task which takes in the result of the Transform task, by reading it
    from xcom and, instead of saving it for end-user review, just prints it out.
    """
    )

    # Run the tasks in ETL order
    extract_task >> transform_task >> load_task
```
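To see how the three callables hand data to each other without running an Airflow scheduler, here is a minimal sketch that drives them in order against a plain dictionary. `FakeTaskInstance` and `run_pipeline` are hypothetical helpers, not part of Airflow: the fake only mimics the `xcom_push`/`xcom_pull` calls the DAG makes, and this `load` returns its value (the DAG version only prints it) so the result can be checked.

```python
import json


class FakeTaskInstance:
    """Hypothetical stand-in for Airflow's TaskInstance (NOT Airflow's API).

    Stores XCom values in a shared dict keyed by (task_id, key), mimicking
    only the push/pull pattern used by the ETL DAG.
    """

    def __init__(self, task_id, store):
        self.task_id = task_id
        self._store = store

    def xcom_push(self, key, value):
        self._store[(self.task_id, key)] = value

    def xcom_pull(self, task_ids, key):
        return self._store.get((task_ids, key))


def extract(**kwargs):
    ti = kwargs['ti']
    # Same hardcoded order data as the DAG's extract task
    data_string = '{"1001": 301.27, "1002": 433.21, "1003": 502.22}'
    ti.xcom_push('order_data', data_string)


def transform(**kwargs):
    ti = kwargs['ti']
    order_data = json.loads(ti.xcom_pull(task_ids='extract', key='order_data'))
    total_order_value = sum(order_data.values())
    ti.xcom_push('total_order_value', json.dumps({"total_order_value": total_order_value}))


def load(**kwargs):
    ti = kwargs['ti']
    total_order_value = json.loads(ti.xcom_pull(task_ids='transform', key='total_order_value'))
    print(total_order_value)
    return total_order_value  # returned only so the sketch can be checked


def run_pipeline():
    """Run the callables in dependency order: extract >> transform >> load."""
    store = {}
    extract(ti=FakeTaskInstance('extract', store))
    transform(ti=FakeTaskInstance('transform', store))
    return load(ti=FakeTaskInstance('load', store))
```

Calling `run_pipeline()` prints the total-order-value dictionary and returns it; with the hardcoded orders the total is approximately 1236.7. Keeping the callables free of Airflow imports like this makes the transform logic easy to check in isolation before wiring it into operators.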
